Whether you’re buying into the hype or doing your utmost to avoid it, the number of people turning to AI (artificial intelligence) for help and advice is growing at an incredible rate.

In February 2026, Demandsage released data showing that ChatGPT – the most popular AI engine – had 800 million weekly active users. That is a 100% increase in just 12 months: in February 2025, the number was 400 million.

Zoom out to monthly traffic, and the numbers become even more impressive, with an estimated 5.72 billion visits in January 2026.

In the race to dominate, ChatGPT is leading the way

Here are some more eye-watering statistics about ChatGPT in 2026:

- It processes more than 2 billion daily queries.

- 10 million global users subscribe and pay for ChatGPT Plus.

- It leads the generative AI market with 81.13% market share.

- The app has clocked up 64.27 million downloads so far.

Remember, these figures only relate to ChatGPT. Other people are also embracing competitor AI chatbots, such as Claude, Google Gemini, Microsoft Copilot, Perplexity, and Grok.

Top 4 query categories on ChatGPT

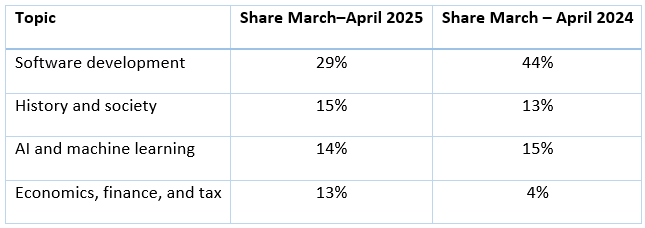

The image above shows the top four categories of prompts people are putting into ChatGPT.

Source: Visual Capitalist

One standout statistic is the increase in queries relating to economics, finance, and tax.

This data is supported by survey results reported by Sky News, which revealed that 40% of Brits have used AI for financial advice.

5 dangers of using AI for financial advice

1. It doesn’t know you and can’t take a holistic approach

If you’re already working with us, you’ll understand that financial advice relies on personal conversations to gain a deep understanding of what matters to you, your circumstances, and your goals.

When it comes to complex finances across different jurisdictions, it also requires some outside-the-box thinking – a long way from the type of generic response you’ll receive from AI algorithms, which rely on templates and models based on generic data.

Instead of understanding your unique circumstances, AI may provide generic or misleading suggestions that seem credible but aren’t appropriate for you. For example, it may suggest an investment strategy for a generic risk profile without accounting for life events you may have on the horizon.

2. It doesn’t understand or work within the regulatory framework

We are regulated by the Monetary Authority of Singapore and must abide by Singapore’s financial rules and fiduciary standards. And when we advise you, we must adhere to strict standards and procedures designed to protect customers against harm or bad conduct caused by firms.

AI, on the other hand, has no meaningful governance, and no professional obligation to act in your best interests.

So, if you follow advice from an AI engine and something goes wrong, there’s no recourse and no accountability.

In Singapore, the Monetary Authority’s proposed AI Risk Management Guidelines also emphasise the need for governance and oversight when institutions use AI, because unregulated use can harm consumers.

3. Your data may not be protected

To gain any kind of meaningful financial input from AI, you’ll likely need to share sensitive personal and financial information. But AI platforms don’t tend to have strong privacy or data protection standards.

This can leave you exposed to privacy breaches, misuse of your data, or even hacking.

While regulated financial planners are bound by strict data protection laws and overseen by regulators who can take action in the event of a breach, AI platforms may not be held to the same standards and often offer no such protections or accountability.

4. It can get even the basic facts wrong

You may have come across the term “hallucinate”, often used as a catch-all excuse for AI errors. In other words, AI has an uncanny ability to give you the wrong answer, and it does so with such confidence that it’s all too easy to take it at face value.

In fact, FTAdviser reports that AI gets advice right only a little over half the time.

Asked 100 questions, AI tools were correct only 56% of the time; 27% of answers were deceptive or misleading, and 17% were just plain wrong.

- 52% of questions about investing and pensions were answered incorrectly.

- 70% of questions about major life events and purchases, such as buying a first home, an engagement ring, or a car, were answered incorrectly.

So don’t be fooled into taking confident AI responses as gospel.

Instead, ask a real expert – we don’t hallucinate, and are here to help you avoid making potentially costly mistakes.

5. No emotional intelligence, perspective, or ongoing human support

A good financial planner doesn’t just build a strategy to help you achieve your long-term goals. They also provide perspective when emotions threaten to derail your well-formed plan.

During market downturns, for example, it can be tempting to sell your investments to avoid further losses. We’re always on call and can help you step back, focus on the bigger picture, and avoid costly knee-jerk decisions.

Similarly, when a high-performing trend or “can’t-miss” opportunity dominates headlines, we can help you navigate the hype and assess whether it genuinely fits your long-term plan.

We’re here to help you stay focused during stressful times, offer encouragement, and bring a sense of perspective when you need it most.

If you’d like to speak to a real, human financial planner for reassuring personal advice, we’d be delighted to answer your questions.